For decades, commercial real estate operated on a simple axiom: location, location, location. The right address determined the right price. Data centers were no exception — proximity to fiber networks, population centers, and enterprise clients drove site selection decisions for most of the industry’s history.

That axiom is being retired.

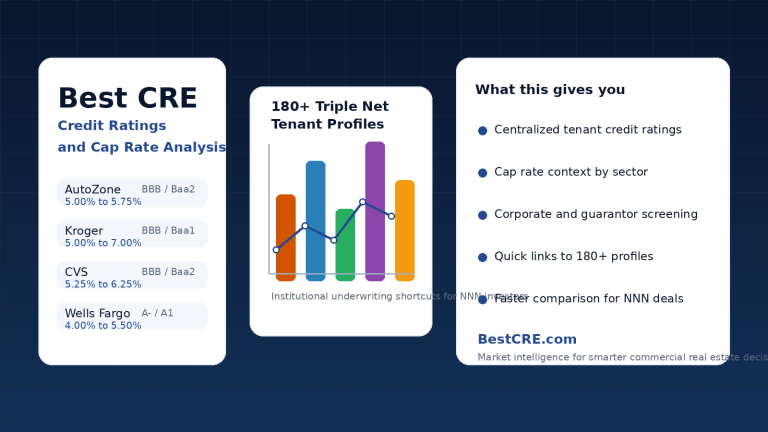

In 2026, the defining variable for data center real estate is not where a facility sits on a map. It is whether the site can be powered, on a timeline that a tenant can actually underwrite. Power availability — specifically, deliverable megawatts with a credible interconnection schedule — has become the master constraint that determines which markets grow, which projects pencil, and which developers can compete. For investors, operators, and practitioners trying to understand where capital is moving in commercial real estate AI, the data center sector is the clearest place to start. It sits within CRE Asset Classes, one of the 20 sectors BestCRE tracks across the commercial real estate AI landscape.

The Numbers Are Not Subtle

U.S. data center vacancy has fallen below 2 percent across primary markets, according to CBRE’s North America Data Center Trends report, the tightest conditions in at least twelve years. Pre-leasing activity tells the same story from a different angle: roughly 74 to 80 percent of all capacity currently under construction is already committed before a single rack is installed. Hyperscalers and AI infrastructure operators are not waiting for a certificate of occupancy. They are signing leases on buildings that exist only in a permitting file and a power application queue.

Rental rates in the sector have grown 50 percent since 2022. That kind of appreciation is rare in traditional commercial real estate sectors. What makes it more striking is that rents are not denominated in dollars per square foot, the way an office or industrial lease would be; they are denominated in dollars per kilowatt of capacity per month. The product being sold is not space. It is powered infrastructure.
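A minimal sketch of how kilowatt-based pricing turns into a rent roll. The $150 per kW per month rate, the 10 MW lease, and the 100,000-square-foot building are hypothetical inputs for illustration, not figures from the CBRE report:

```python
# Hypothetical inputs for illustration only; not figures from the CBRE report.
RATE_PER_KW_MONTH = 150.0   # assumed rent, dollars per kW of capacity per month
LEASED_MW = 10.0            # assumed leased critical capacity
BUILDING_SQ_FT = 100_000    # assumed gross building area


def annual_rent_usd(leased_mw: float, rate_per_kw_month: float) -> float:
    """Annual rent for a lease priced in dollars per kW of capacity per month."""
    leased_kw = leased_mw * 1_000               # 1 MW = 1,000 kW
    return leased_kw * rate_per_kw_month * 12   # twelve monthly bills


def implied_rent_per_sq_ft(leased_mw: float, rate_per_kw_month: float, building_sq_ft: float) -> float:
    """Back out a per-square-foot figure purely for comparison with office or industrial leases."""
    return annual_rent_usd(leased_mw, rate_per_kw_month) / building_sq_ft


if __name__ == "__main__":
    print(f"Annual rent: ${annual_rent_usd(LEASED_MW, RATE_PER_KW_MONTH):,.0f}")
    print(f"Implied rent: ${implied_rent_per_sq_ft(LEASED_MW, RATE_PER_KW_MONTH, BUILDING_SQ_FT):,.2f} per sq ft per year")
```

Holding the building at 100,000 square feet and doubling the deliverable capacity to 20 MW doubles the rent, which is the sense in which the product is powered infrastructure rather than floor area.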

The top five hyperscalers — Amazon Web Services, Microsoft Azure, Google, Meta, and Oracle — are projected to spend approximately $602 billion on capital expenditures in 2026, a 40 percent increase over the prior year. McKinsey estimates that $5.2 trillion will be deployed into AI-dedicated data center infrastructure globally by 2030. These are not projections built on optimism. They are downstream of committed AI spending that has already been announced and in many cases contracted.
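Working backward from the two cited figures gives the implied prior-year base. This is arithmetic on the projection, not a separately reported number:

```latex
\text{Capex}_{2025} \approx \frac{\text{Capex}_{2026}}{1 + 0.40}
  = \frac{\$602\ \text{billion}}{1.40}
  \approx \$430\ \text{billion}
```

That puts the year-over-year increase itself at roughly $172 billion.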

Why Power Beat Location

The grid did not anticipate AI. Traditional cloud computing consumed power in patterns that were relatively predictable and relatively modest: workloads fluctuated, utilization ebbed and flowed, and data center operators could plan infrastructure around average loads rather than peak sustained demand. AI training and inference workloads behave differently. They are continuous, dense, and thermally aggressive. A rack that once consumed 20 to 40 kilowatts now needs to handle 120 to 140 kilowatts to support current AI hardware. That is a threefold to sevenfold density increase, and the cooling infrastructure required to manage that heat load (primarily liquid cooling, increasingly in direct-to-chip configurations) is substantially more capital-intensive than the air-cooled systems that characterized the prior generation of data centers.
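The threefold-to-sevenfold figure comes from pairing the ends of the two ranges. The sketch below reproduces that and shows the flip side for site planning: how many racks one megawatt of IT load can feed at each density. It treats each rack's rating as steady IT draw and leaves cooling overhead out, which is a simplification:

```python
# Rack power ranges cited above, in kW per rack.
LEGACY_KW_PER_RACK = (20, 40)
AI_KW_PER_RACK = (120, 140)


def density_multiple_range(old: tuple[float, float], new: tuple[float, float]) -> tuple[float, float]:
    """Smallest and largest per-rack density increase implied by two kW ranges."""
    smallest = new[0] / old[1]  # a 40 kW rack moving to 120 kW
    largest = new[1] / old[0]   # a 20 kW rack moving to 140 kW
    return smallest, largest


def racks_per_mw(kw_per_rack: float) -> float:
    """Racks one megawatt of IT load can feed, ignoring cooling and electrical overhead."""
    return 1_000 / kw_per_rack


if __name__ == "__main__":
    low, high = density_multiple_range(LEGACY_KW_PER_RACK, AI_KW_PER_RACK)
    print(f"Density increase: {low:.0f}x to {high:.0f}x")
    for kw in (*LEGACY_KW_PER_RACK, *AI_KW_PER_RACK):
        print(f"{kw} kW per rack -> {racks_per_mw(kw):.1f} racks per MW")
```

A megawatt that once fed 25 to 50 racks now feeds roughly seven or eight, so the same power allocation supports far fewer, far hotter cabinets.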

Grid interconnection timelines in major markets have stretched to five to seven years. Many substations are tapped out. Transmission upgrades require regulatory approval that moves at the speed of utility commissions, not the speed of hyperscaler capex cycles. In that environment, the site that already has a secured power purchase agreement and a near-term energization date is not just preferable; it is a scarce asset with pricing power that mirrors commodity scarcity more than real estate scarcity. In constrained metros, colocation behaves less like a real estate product and more like a power access product, because the hardest thing to secure is not land but deliverable megawatts on a timeline customers can underwrite.
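To make "deliverable megawatts on a timeline customers can underwrite" concrete, here is a toy screening filter. The `Site` record, its field names, and the sample sites are hypothetical; real site selection weighs many more factors, but the two gates shown (enough megawatts, and power-on before the tenant's deadline) are the ones the paragraph above argues now dominate:

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Site:
    """Hypothetical candidate site record."""
    name: str
    deliverable_mw: float    # capacity with a credible energization plan behind it
    energization_date: date  # expected power-on date (grid or on-site generation)


def underwritable_sites(sites: list[Site], required_mw: float, tenant_deadline: date) -> list[Site]:
    """Keep sites that can deliver the tenant's megawatts by its deadline, earliest power-on first."""
    qualifying = [
        s for s in sites
        if s.deliverable_mw >= required_mw and s.energization_date <= tenant_deadline
    ]
    return sorted(qualifying, key=lambda s: s.energization_date)


# Illustrative pipeline: one site stuck in a long interconnection queue, one too small.
SITES = [
    Site("Site A", deliverable_mw=200, energization_date=date(2027, 6, 1)),
    Site("Site B", deliverable_mw=300, energization_date=date(2031, 1, 1)),
    Site("Site C", deliverable_mw=80, energization_date=date(2026, 12, 1)),
]

if __name__ == "__main__":
    for site in underwritable_sites(SITES, required_mw=150, tenant_deadline=date(2028, 1, 1)):
        print(site.name, site.deliverable_mw, site.energization_date.isoformat())
```

Site B has the most capacity but fails the timeline gate, which is the scarcity the paragraph describes: megawatts that arrive after the tenant's deadline do not count.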

This dynamic has reshuffled the competitive map in ways that would have been difficult to predict five years ago. Northern Virginia, which has historically dominated U.S. data center development, is now running into the limits of the advantages it once exploited: land is tighter, power queues are longer, and specialized construction labor is in short supply. Markets like West Texas, parts of the Midwest, and rural areas with access to renewable generation or gas pipeline infrastructure are seeing gigawatt-scale pre-leasing activity that would have been implausible in a prior era.

The Geography Is Shifting — But Not Permanently

Secondary and tertiary market expansion is a direct response to primary market constraints. Developers who cannot secure power in Northern Virginia are looking at Ohio, Georgia, Wisconsin, and the Carolinas. Some are co-locating near nuclear plants. Others are pursuing behind-the-meter generation strategies — running natural gas turbines or fuel cells as primary power sources with the grid as backup — to sidestep interconnection queues entirely. Vantage’s $15 billion Stargate commitment in Wisconsin is an example of the scale at which these alternative strategies are being pursued.

But the secondary market migration is not a permanent geographic shift. As AI applications evolve from compute-heavy training workloads toward real-time inference — the kind of AI that runs in consumer and enterprise products, responding to queries in milliseconds — latency becomes a constraint again. Inference workloads need to be close to users. That will eventually pull development back toward population centers, creating a second wave of demand in markets near major metros that can balance grid access with geographic proximity. The markets best positioned for that second wave are not the same ones dominating the current training buildout.
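Why proximity matters again for inference comes down to propagation delay. A minimal sketch, assuming the common rule of thumb that light covers about 200 km per millisecond in optical fiber: every 100 km of route adds roughly a millisecond of round-trip time before any processing happens:

```python
# Light travels through optical fiber at roughly two-thirds of its vacuum speed,
# about 200 km per millisecond. This is an approximation, not a measured figure.
FIBER_KM_PER_MS = 200.0


def fiber_rtt_ms(route_km: float) -> float:
    """Best-case round-trip propagation delay over a fiber route.

    Ignores switching, queuing, and server processing time, and assumes the route
    length is known (fiber paths usually run longer than straight-line distance).
    """
    return 2 * route_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    for km in (50, 500, 1_500):
        print(f"{km:>5} km route -> at least {fiber_rtt_ms(km):.1f} ms round trip")
```

A site 1,500 km of fiber away from its users starts every request about 15 ms behind a metro-edge site, a gap that matters little for training but directly for queries expected to return in milliseconds.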

For practitioners evaluating the best CRE industrial real estate opportunities alongside data centers, this geographic evolution matters. Secondary markets absorbing data center development are often the same markets where industrial fundamentals are being tested by shifting supply chains and energy infrastructure investment. The two sectors are competing for some of the same land, labor, and grid capacity.

Capital Structure Is Adapting to a New Risk Profile

The financing landscape for data centers has changed as substantially as the operational landscape. CMBS issuance for data centers hit an all-time high of approximately $4.5 billion in Q1 2025 alone, led by Switch’s $2.4 billion deal and QTS’s $2.05 billion transaction. Banks are approaching concentration limits, creating pressure toward 144A debt structures — a shift from relationship-driven private placement lending toward broader capital markets with different pricing dynamics and investor expectations.

What makes data center underwriting genuinely different from traditional real estate underwriting is the layering of execution risk. A conventional office or industrial project carries construction risk, lease-up risk, and interest rate risk. A data center project carries all of those plus power delivery risk, technology obsolescence risk, and, increasingly, community opposition risk. GPU refresh cycles run on three- to five-year timelines, far shorter than the 30- to 50-year economic life of the facility itself. Twenty-five proposed data centers were canceled in 2025 due to local opposition, grid constraints, and rising costs. Arizona’s governor has moved to remove tax incentives for data centers to slow grid pressure in that state.
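The mismatch between the two timelines is easy to quantify. Assuming each refresh is a complete hardware swap at a fixed interval (real refreshes are staggered and partial), the cited ranges imply the shell and power infrastructure must accommodate many generations of equipment:

```python
def refresh_cycle_range(facility_life_years: tuple[int, int],
                        refresh_interval_years: tuple[int, int]) -> tuple[int, int]:
    """Fewest and most full hardware refreshes that fit inside the facility's economic life."""
    fewest = facility_life_years[0] // refresh_interval_years[1]  # shortest life, slowest refresh
    most = facility_life_years[1] // refresh_interval_years[0]    # longest life, fastest refresh
    return fewest, most


if __name__ == "__main__":
    fewest, most = refresh_cycle_range((30, 50), (3, 5))
    print(f"The facility would host {fewest} to {most} full GPU refresh cycles.")
```

Each of those cycles can bring new density and cooling requirements, which is why obsolescence risk sits with the building as well as with the tenant's hardware.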

Investors are pricing these risks differently than they priced traditional real estate risk. The locus of value has shifted from tenant diversification (the traditional REIT logic of spreading the rent roll across multiple occupants) to power assurance. A single hyperscale tenant with a multi-year take-or-pay lease structure and a creditworthy balance sheet is now the preferred profile, because the certainty of its power commitment is what makes the project financeable.
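Why take-or-pay matters to lenders can be shown with a toy comparison. The model below is a deliberate simplification with hypothetical inputs (a 50 MW commitment, an assumed $150 per kW per month, and a made-up first-year ramp); real leases layer in ramp schedules, escalators, and termination terms. Under take-or-pay the revenue line does not move with utilization:

```python
def annual_revenue(committed_kw: float, monthly_draw_kw: list[float],
                   rate_per_kw_month: float, take_or_pay: bool) -> float:
    """Sum the monthly bills for the draws in monthly_draw_kw under a simplified lease model."""
    total = 0.0
    for drawn_kw in monthly_draw_kw:
        if take_or_pay:
            billable_kw = committed_kw  # tenant pays for reserved capacity whatever it draws
        else:
            billable_kw = min(drawn_kw, committed_kw)  # pays only for use, capped at the reservation
        total += billable_kw * rate_per_kw_month
    return total


# Hypothetical ramp: a 50 MW commitment that the tenant fills over its first year.
COMMITTED_KW = 50_000
RAMP_KW = [10_000] * 3 + [30_000] * 3 + [50_000] * 6
RATE = 150.0  # assumed dollars per kW per month

if __name__ == "__main__":
    for label, is_take_or_pay in (("take-or-pay", True), ("usage-based", False)):
        print(f"{label}: ${annual_revenue(COMMITTED_KW, RAMP_KW, RATE, is_take_or_pay):,.0f}")
```

With these inputs the take-or-pay lease yields $90 million in the first year against $63 million if the same tenant paid only for what it drew, and only the first figure is known before the tenant moves in.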

The 9AI Framework, which we use at BestCRE to evaluate CRE AI platforms, includes signal layers around how AI tools process dynamic, unstructured, and fast-moving data. That same analytical lens applies to data center underwriting. In a market where the underlying inputs — power availability, interconnection timelines, utility commitments — are imprecise and rapidly shifting, the advantage goes to the party who can synthesize those signals fastest and act before the window closes.

What AI Is Doing to Its Own Infrastructure

There is a productive irony embedded in the data center story. Artificial intelligence — the technology driving unprecedented demand for physical computing infrastructure — is simultaneously being deployed to manage that infrastructure more efficiently. AI-driven data center infrastructure management tools are automating maintenance scheduling, predicting equipment failures before they occur, and fine-tuning power and cooling in real time. Digital twin technology allows operators to simulate configuration changes and load scenarios before implementing them in production environments where downtime is contractually costly.
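Predicting failures usually starts with spotting sensor readings that drift away from a component's normal behavior. As a deliberately simple stand-in (commercial DCIM platforms use their own, generally richer models, and nothing here describes any vendor's method), a trailing-window z-score flags a reading that sits far outside recent history. The coolant readings, window size, and threshold are hypothetical:

```python
from statistics import mean, stdev


def flag_anomalies(readings: list[float], window: int = 12, threshold: float = 3.0) -> list[int]:
    """Indices of readings more than `threshold` standard deviations from the trailing-window mean."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical coolant supply temperatures (deg C) from one rack loop, with one excursion.
SAMPLE_READINGS = [
    18.0, 18.2, 18.1, 18.3, 18.2, 18.0, 18.1, 18.2, 18.3, 18.1,
    18.2, 18.0, 18.1, 18.2, 18.1, 21.5, 18.2, 18.1,
]

if __name__ == "__main__":
    for i in flag_anomalies(SAMPLE_READINGS):
        print(f"reading {i}: {SAMPLE_READINGS[i]} deg C looks abnormal; schedule an inspection")
```

A trailing window keeps the check causal (each reading is judged only against the past), which is what a live monitoring loop needs; the trade-off is that a single spike inflates the window's spread for the next several readings.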

This creates a feedback loop worth understanding. The better operators get at using AI to optimize their facilities, the more efficiently they can run high-density AI workloads, which generates more revenue per megawatt, which improves underwriting, which attracts more capital, which funds more development. The sector is not just a beneficiary of AI demand. It is actively using AI to become a better version of itself.
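One concrete channel for "more revenue per megawatt" is cooling overhead. Power usage effectiveness (PUE), the industry's standard ratio of total facility power to IT equipment power, is not mentioned above, so treat this as an added illustration under stated assumptions: the utility allocation caps total facility draw, and tenants pay only per IT kilowatt. The allocation, PUE values, and $150 rate are hypothetical:

```python
def sellable_it_kw(grid_allocation_mw: float, pue: float) -> float:
    """IT load that fits under a fixed utility allocation, where PUE = total facility power / IT power."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return grid_allocation_mw * 1_000 / pue


def annual_revenue_per_grid_mw(pue: float, rate_per_kw_month: float) -> float:
    """Rent earned per megawatt of utility allocation when tenants pay only for IT kW."""
    return sellable_it_kw(1.0, pue) * rate_per_kw_month * 12


if __name__ == "__main__":
    for pue in (1.5, 1.3, 1.2):
        print(f"PUE {pue}: {sellable_it_kw(100, pue):,.0f} sellable IT kW on 100 MW, "
              f"${annual_revenue_per_grid_mw(pue, 150.0):,.0f} per grid MW per year")
```

With these inputs, moving from a PUE of 1.5 to 1.2 lifts annual rent per grid megawatt from $1.2 million to $1.5 million without any new interconnection.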

That loop creates a useful evaluative lens for the CRE practitioners, capital allocators, and technology buyers following this space. The question is not simply whether data centers are a good investment; at sub-2 percent vacancy with 74 to 80 percent of new construction pre-leased, the current fundamentals answer that question. The more interesting question is which participants are using AI-native tools to gain durable operational advantages, and which are still running on legacy infrastructure management approaches that will become competitive liabilities as density requirements continue to escalate.

M&A Is Coming, and Quickly

One signal worth watching closely: nearly every major investment banking team was present at the 2026 Power, Technology, and Construction conference — a gathering that has not historically drawn that level of financial advisory attention. With single-digit vacancy, available capital, tangible demand, and a strong preference for portfolio creation over single-asset investment, the conditions for significant M&A activity in the sector are in place. Expect consolidation among mid-tier operators and forward commitments structured as acquisition vehicles rather than traditional development partnerships.

Deal structures are already adapting. Multi-year leases with creditworthy hyperscale tenants continue to anchor underwriting, while asset-backed securities have become a baseline financing tool for stabilized assets, enabling developers to recycle capital efficiently. Third-party infrastructure developers are emerging as a distinct capital segment — willing to shoulder part of the construction and power delivery burden in exchange for preferred equity or structured returns that don’t require them to own the operating business long-term.

Where This Leaves Capital in 2026

The data center sector in 2026 is not a discovery opportunity. It is a durability opportunity. The investors and developers who are best positioned are not those who spotted data centers before the crowd — that window closed several cycles ago. They are the ones who have secured power infrastructure in the right markets, built relationships with utilities at the executive level rather than the procurement level, and structured deals with enough flexibility to absorb the technology refresh cycles that are baked into this asset class.

For those approaching from the best CRE office market angle — evaluating where enterprise occupiers are making long-term infrastructure commitments — data center demand from those same enterprises creates an indirect but real linkage. Companies building AI into their core operations are simultaneously making decisions about physical office footprints and computing infrastructure, and those decisions are not independent of each other.

The short version of the data center thesis in 2026 is this: power is the product, megawatts are the currency, and the competitive moat belongs to whoever can deliver powered capacity on a timeline their tenants can actually use. That is not a real estate story in the traditional sense. It is an infrastructure story that happens to wear a real estate jacket. Understanding the distinction is the first step toward deploying capital intelligently in the sector — or evaluating the AI platforms being built to help practitioners do exactly that.

BestCRE exists to map commercial real estate AI honestly — the platforms worth paying for, the ones you can replicate yourself, and the market forces shaping where capital is moving. Coverage spans 20 sectors and is evaluated through the 9AI Framework. If you’re deploying capital, advising clients, or building in CRE, this is the resource built for you.

Frequently Asked Questions

What is the biggest constraint on data center development in 2026?

Power availability is the primary constraint, not land or capital. Grid interconnection timelines in major U.S. markets have stretched to five to seven years, and the gap between demand for powered capacity and the ability to deliver it is widening. Developers are pursuing alternatives including behind-the-meter generation, nuclear co-location, and secondary market expansion to access power faster than traditional interconnection allows.

Why are data center rents measured in dollars per kilowatt rather than dollars per square foot?

Because the scarce commodity being leased is not physical space — it is powered infrastructure. As AI workloads drive rack density from 20 to 40 kilowatts per rack toward 120 to 140 kilowatts, the ability to deliver and sustain that power load becomes the core value proposition. A facility’s square footage matters far less than its megawatt capacity and the certainty of its power delivery timeline.

Which U.S. markets are seeing the most data center activity in 2026?

Northern Virginia, Dallas, Phoenix, Chicago, and Silicon Valley remain the most active primary markets, though all face tight vacancy and power constraints. Secondary markets including Ohio, West Texas, Wisconsin, Georgia, and the Carolinas are absorbing significant new development driven by land availability, lower energy costs, and shorter interconnection timelines. Markets near nuclear plants are also attracting interest as operators seek carbon-free power outside the traditional grid.

How is AI being used inside data centers themselves?

Data center operators are using AI-driven infrastructure management tools to automate maintenance scheduling, predict equipment failures before they occur, and optimize power and cooling in real time. Digital twin technology allows operators to simulate load changes and configuration updates before applying them in live environments. These tools let operators run high-density AI workloads at higher utilization while reducing operational risk and labor requirements.

What makes data center underwriting different from traditional CRE underwriting?

Data center deals carry execution risk layers that do not exist in conventional real estate. In addition to standard construction, lease-up, and interest rate risk, investors must underwrite power delivery risk, technology obsolescence risk from short GPU refresh cycles, and growing community opposition risk. The preferred tenant profile has also shifted from diversified rent rolls toward single hyperscale tenants with take-or-pay lease structures, because their power commitments are what make a project financeable at institutional scale.